When the Norwegian Broadcasting Corporation (NRK) planned the television broadcast of the ongoing Chess Olympiad 2014 in Tromsø, Norway, we encountered a challenge: how do we mix video, graphics and the results of many simultaneous chess games? The solution included a rack full of mini-switchers, open-source software, HTML5 code still in beta – and a bunch of $80 miniature computers.

https://www.youtube.com/watch?v=N13BwuYE054

Illustration showing how the various elements in the broadcast from each match are put together.

Chess TV?

With Magnus Carlsen rising as a star in the chess world, interest in the game has increased dramatically here in Norway. During Carlsen’s World Championship match against Viswanathan Anand in Chennai, India, last year, we found a formula that also made the game exciting to follow on TV. It consisted of a camera shot of the seated players, a graphical representation of the chess board and the computer’s evaluation of the position – all displayed on top of background graphics.

In the studio we had experts who explained the game – both verbally and on a graphical analysis board – and answered questions from the presenter and from the audience via email and social media (using #NRKsjakk).

Chess Olympiad bound for Norway

For the Chess Olympiad, held on home soil in Tromsø, we wanted our broadcasts to build on the formula we discovered during the World Championship in India. While the game remained the same, the challenge for us as a broadcaster changed immensely. In Chennai there was one game at a time; in Tromsø there would be around 650 simultaneously. In addition, the winner is not decided by individual games but by the combined results of all four players on each national team, where every game counts.

Challenging images

One challenge when it came to images was that the players are seated very close to each other in a gigantic hall, with the audience in close proximity to many of the tables. This means the area in front of the tables is often packed with spectators. Although the games are played in a silent hall, players are still free to leave their seats and walk around between the tables.

This makes it complicated to film with the big, manually operated cameras we would traditionally use, mainly because it is difficult to find positions that can cover many games, but also because we have no guarantee that audience members, judges, officials or players will not be standing in the way of our cameras.

We therefore adjusted our plan to use small unmanned wide-angle cameras mounted right next to each of the tables.

Comprehensive visual mixing

When we began the work, our editorial plan was to always film Norway’s first team in both the women’s section and the open section – in addition to the two nations that were at the top of the table at any given time. That is a total of four matches of four games each, which required 16 cameras in place at the tables.

Additionally, we wanted to wrap the images from all of these 16 cameras into the existing graphics we had developed for the broadcasts from India. To name a few things, we wanted to show automatically updated chess boards and, on top, information about the state of the other games in the same match. This quickly made it a very complex visual production, where we constantly had to mix a lot of different graphics and videos together.

During the World Championship in Chennai, we used a graphics generator from VizRT for the graphic elements. VizRT is used in most of our productions, and a lot of people at NRK work with both the set-up and the operation of the graphics system. The only problem was that their schedule was filled up with other pressing tasks, such as the World Cup in Brazil, and they did not have the capacity to take on another complicated task as well.

To be clear: there is still a VizRT server with an operator in the chess production. It is used for all graphics other than the game graphics. This includes the names of guests and presenters, Twitter messages, photos from Instagram, tickers and table displays.

Alternative solutions

At first, the idea was to use a computer with a webcam for each of the 16 games, then mix video images, background animation and results in software on each of them.

Afterwards the finished mix of images would be streamed to separate channels in our web player, so that the online audience would be able to choose which game they wanted to follow. This solution would also provide our outside broadcasting van (OB van) with 16 finished video sources composed of video, graphics and results. This would make the complex job of mixing all video signals much easier.

After careful consideration we concluded that, for our web audience, it would be better to skip the streams of individual games and spend our resources on building web pages that could present all games in the championship via HTML in real time. This would also give the audience the opportunity to step back and forth through the moves and revisit all the previous games in the championship. We started working on it immediately, and you can find the result on our website nrk.no/sjakk.

Simplification

To keep the TV program clearer, we figured we should concentrate on one match at a time, and at the same time we decided to reduce the number of matches from four to three by prioritizing only one top match each day.

Having made that decision, we still needed to combine the video from the 12 games with graphics and results before it was passed on to the OB van.

Only for TV

Since the product we were creating would only be used on TV, it was less natural to solve the task using basic web technology. We began to look for solutions where we could stick to our production format for television, which is 1080i50 via HD-SDI.

(Photo: NRK)

Better camera

When it came to cameras, the Canon XA25 seemed like a perfect fit. These are small camcorders that, in addition to the usual HDMI output, also have an HD-SDI output. It was also practical that we already had a lot of these cameras at NRK.

From Sweden with help

To render the graphics and put it all together, one of the software solutions we looked at was CasparCG. This is an open-source graphics and video playout server, developed by our colleagues at Sveriges Television (SVT). It runs on powerful Windows 7 PCs with an Nvidia graphics card and supports HD-SDI in and out via a Decklink PCIe card.

(Photo: Blackmagic Design)

Adobe Flash?? In 2014???

A disadvantage of CasparCG is that it uses the now aging Adobe Flash technology to create all graphics that change dynamically during production. In our case, this meant all text, chess pieces and the computer evaluation.

Belgian Beta

In January this year a beta version of CasparCG 2.0.7 was released, and it included a new feature developed by VRT, the Flemish national broadcaster in Belgium. It makes it possible to draw dynamic graphics in HTML5, with support for JavaScript and CSS.

This meant we could more easily reuse much of the underlying solution that delivers the results on our website nrk.no/sjakk, and then write dedicated pages for displaying them on TV.

Almost, but not quite

Although the CasparCG website warns against using the beta version in production, we chose to test it. The looping of the background animation and the drawing of the dynamic graphics worked brilliantly, but the fly in the ointment was high CPU usage and an unacceptably long delay on the camera images when they passed through the server. At that point we had cameras and a good solution for generating graphics, but we still had not figured out how to mix the two together.

Eureka

What we usually do to combine two HD-SDI sources is to use a switcher, and we soon found a solution.

Using four small switchers and the CasparCG server with a four-channel Decklink HD-SDI card, we could build a switcher matrix where we needed only one computer to do the job instead of 12. The result is that we can at any point send any of the four games in one match, mixed with the appropriate graphics from CasparCG, to the OB van. The downside is that in the van we do not get a preview of the other two matches at the same time. As our plan was always to go via the studio before changing to another match, this turned out not to be an issue.
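The matrix described above can be pictured with a minimal Python sketch. It is not the production set-up: the numbering scheme, the function names and the assumption that switcher n handles board n while input m carries match m are all hypothetical, chosen only to show how 12 cameras and four graphics channels can be addressed by one match selection.

```python
MATCHES = 3  # matches covered per round (from the article)
BOARDS = 4   # games per match; assumed: one switcher and one CasparCG channel per board


def camera_number(match, board):
    """Return a 1-based camera number (1..12) for a match/board pair (hypothetical numbering)."""
    if not (1 <= match <= MATCHES and 1 <= board <= BOARDS):
        raise ValueError("match or board out of range")
    return (match - 1) * BOARDS + board


def routing_for_match(match):
    """List what each of the four switchers should put on air for the selected match."""
    return [
        {
            "switcher": board,          # assumed: switcher n handles board n
            "camera_input": match,      # assumed: input m carries the camera for match m
            "camera": camera_number(match, board),
            "graphics_channel": board,  # assumed: CasparCG channel n feeds switcher n
        }
        for board in range(1, BOARDS + 1)
    ]
```

Calling `routing_for_match(2)` returns four entries, one per switcher, which is the set of changes that has to happen together when the broadcast moves to the second match.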

Control

After this, only one challenge remained: how would we control the switch between the different matches? To do that, we need to select a new camera input on all four switchers, as well as load new HTML pages on the four channels of the CasparCG server. It must all be done at once, and preferably automatically, based on GPI pulses from the switcher in the van, to simplify the execution.

We already had four affordable Atem switchers from Blackmagic Design available, so all we had to do was start testing them.

(Photo: Blackmagic Design)

CasparCG is server software that is not operated directly, but controlled via graphical client software or telnet commands over a network. Atem switchers are similar, and can be controlled from a dedicated control panel or via software over Ethernet.

Since all devices can be controlled over the network, we began our investigation there. The telnet commands CasparCG uses are completely open, while the protocol for controlling the Atem switchers is not that simple. Luckily the internet is full of talented people, and Kasper Skårhøj from Denmark has written a library for Arduino to control such Atem switchers.
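To give an idea of what such a text command can look like, here is a minimal Python sketch that builds and sends one. It is an illustration under assumptions, not the code used in the production: the `PLAY ... [HTML]` form and port 5250 reflect CasparCG's AMCP protocol as commonly documented, while the layer number, the URL and both function names are hypothetical.

```python
import socket

AMCP_PORT = 5250  # commonly documented default port for CasparCG's AMCP protocol


def amcp_play_html(channel, layer, url):
    """Build a command asking a channel/layer to render an HTML page (assumed syntax)."""
    return f'PLAY {channel}-{layer} [HTML] "{url}"\r\n'


def send_amcp(host, command, port=AMCP_PORT, timeout=2.0):
    """Send one command over TCP and return the first chunk of the server's reply."""
    with socket.create_connection((host, port), timeout=timeout) as conn:
        conn.sendall(command.encode("utf-8"))
        return conn.recv(4096).decode("utf-8", errors="replace")
```

Building the four commands for one match is then a loop over channels 1 to 4, for example `amcp_play_html(1, 10, "http://example.invalid/board1.html")`, where the address is a placeholder rather than a real NRK page.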

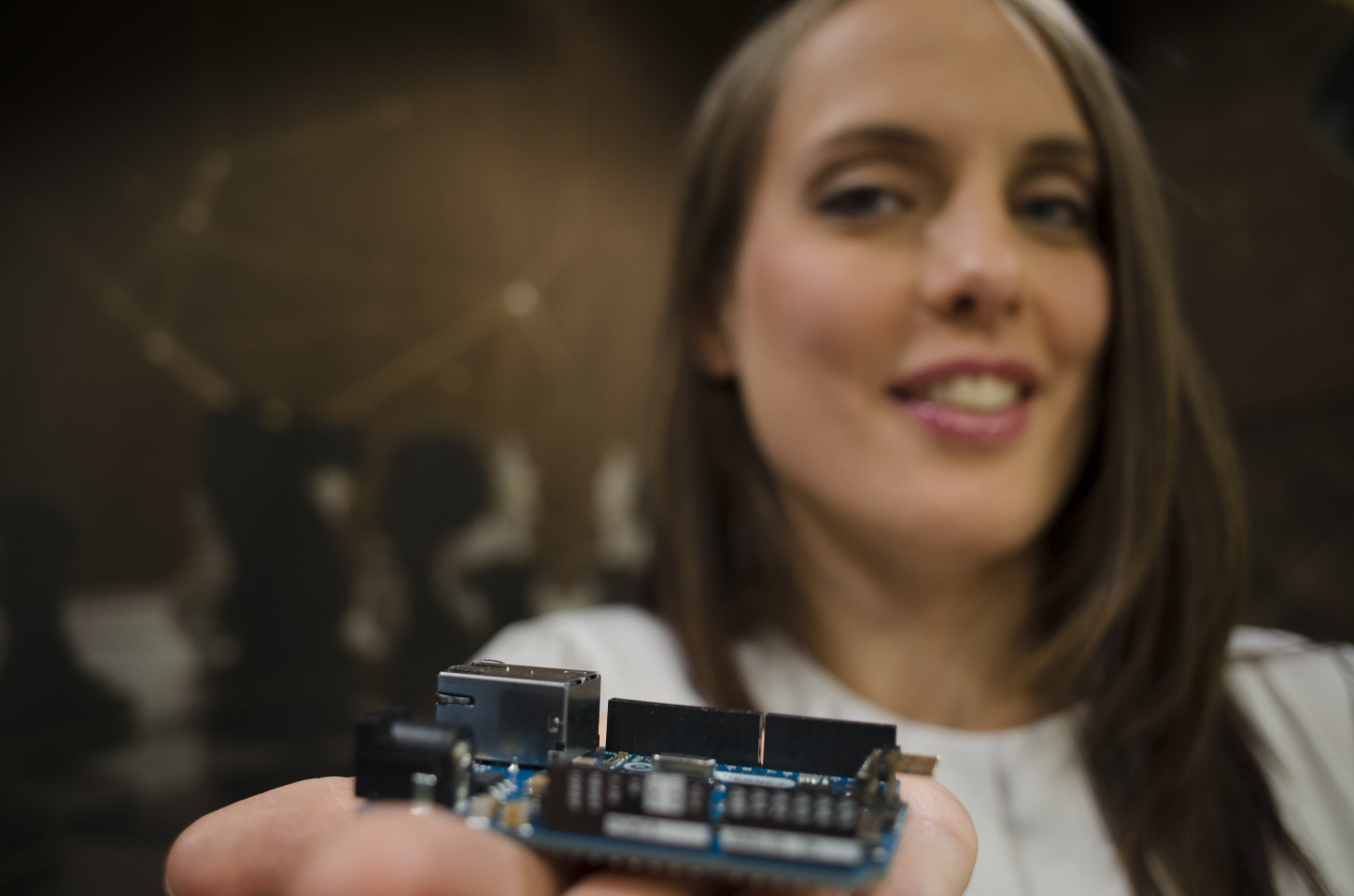

Arduino is an open-source electronics platform based on hardware and software that makes it easy for anyone to create interactive projects.

We soon found ready-made examples of how an Arduino can connect to telnet servers, so this was promising. In theory we should now be able to connect GPI triggers from the OB van to the Arduino, and then connect it to the same network as the four Atem switchers and the CasparCG server.

Network Trouble

We began by testing the sample code provided with the library from Skårhøj. It worked flawlessly with one switcher. With two or more, things became very unstable. Another problem occurred when we could not get the Arduino to run the telnet connection over TCP and the Atem connection over UDP simultaneously.

Separate networks

We concluded that we could solve it all by putting each of the units on a separate private network: a master Arduino, which receives the GPI triggers from the van and communicates with the CasparCG server, plus four slave Arduinos that each communicate with one switcher.

To solve this we had to get the slave Arduinos to listen to the master via something other than the network. The solution was a very basic 2-bit bus between two of the digital pins on all five Arduinos. When the OB van sends a GPI trigger to select match 1, the master Arduino sets the pins to 00; for the second match it is 01, and for the third it is 10. Each slave Arduino reads the pins continuously and, if they change, sends a command to its Atem switcher to change the camera source. Since no confirmation is sent back to the master Arduino, we built a check into every slave: it always reads back from the Atem switcher which camera is active, compares it with the 2-bit bus, and corrects it if they are not equal.
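The bus logic can be sketched in a few lines of Python. This is only a model of the idea, not the Arduino code that ran in Tromsø: `FakeSwitcher` is a hypothetical stand-in for a switcher driven through Skårhøj's library, and the assumption that camera input n corresponds to match n is made up for the example.

```python
def encode_match(match):
    """Master side: turn match number 1..3 into (high_bit, low_bit)."""
    if match not in (1, 2, 3):
        raise ValueError("match must be 1, 2 or 3")
    code = match - 1  # 1 -> 00, 2 -> 01, 3 -> 10
    return (code >> 1) & 1, code & 1


def decode_bus(high_bit, low_bit):
    """Slave side: turn the two pin levels back into a match number, or None for 11."""
    code = (high_bit << 1) | low_bit
    return code + 1 if code < 3 else None  # 11 is not used in the article


class FakeSwitcher:
    """Hypothetical stand-in for one switcher; it only remembers the active input."""

    def __init__(self, active_input=1):
        self.active_input = active_input
        self.commands_sent = 0

    def set_input(self, number):
        self.active_input = number
        self.commands_sent += 1


def slave_step(bus, switcher):
    """One pass of the slave loop: read the bus, compare with the switcher, correct if needed."""
    wanted = decode_bus(*bus)
    if wanted is not None and switcher.active_input != wanted:
        switcher.set_input(wanted)  # assumed: camera input n corresponds to match n
```

Because `slave_step` compares against what the switcher reports rather than against the last value it sent, a lost command or a manual change on the switcher is repaired on the next pass, which is the self-correcting behaviour described above.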

Testing

Finally, we had a prototype that did what we set out to accomplish. What remained was to make it production ready, mount and wire everything in a transport crate. And of course: test, test and test.

We wanted to make sure we uncovered any weaknesses both in our own code on the Arduinos and in the CasparCG server (which, as mentioned, was still in beta).

We therefore set up an Arduino to simulate GPI triggers from the OB van at random intervals of between 5 and 50 seconds, and let it run throughout June without any errors occurring.

Back from vacation in late July, all that remained was to pack everything together and send it to Tromsø, where the solution has been running like clockwork from the start and has helped make the broadcasts from the Chess Olympiad a great success.
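A soak test of this kind boils down to a generator of random waits and match selections. The following Python sketch shows the idea under assumptions: the function name and the choice to pick the target match at random are hypothetical, and only the 5 to 50 second range comes from the text.

```python
import random


def simulated_triggers(count, seed=None, min_gap=5.0, max_gap=50.0, matches=(1, 2, 3)):
    """Yield (seconds_to_wait, match) pairs that mimic GPI triggers from the van."""
    rng = random.Random(seed)  # a fixed seed makes a failing run reproducible
    for _ in range(count):
        yield rng.uniform(min_gap, max_gap), rng.choice(matches)
```

In a real test rig each pair would be followed by sleeping for the given number of seconds and then pulsing the corresponding trigger line; here the pairs are simply produced so the schedule can be inspected or logged.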

Do you see any other uses of this combination of technology?

A fantastic production! NRK can rightly be proud! Worth the licence fee on its own!

NRK delivered absolutely outstanding TV coverage of the «Chess Olympiad». Remember to praise Nora T.B. as well. She was outstanding!

On one of the first days she interviewed a woman from South Africa, because viewers were meant to learn what it was like to travel so far to play chess. Instead she got a lot of advertising from the Team Kasparov camp. The way she swiftly wrapped up that segment was simply so elegant.
